I’ve been wondering how diverse the knowledge of StackOverflow users is. It seemed like an interesting research idea to see how many people have answered questions only in a very narrow field, and how many have broader knowledge and can contribute useful answers in more diverse fields. Apparently, there is even supposed to be a badge for that (the Generalist badge), but it hasn’t been implemented yet.

It’s easy to do this using tags: first cluster the tags according to how often each pair of tags shows up on the same question (a user who knows both ASP and ASP.net shouldn’t be considered a ‘diverse’ person, so this overlap should be factored out first), then count the number of different clusters in which the user has contributed a good answer.
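As a rough illustration of the raw input such a clustering would need, here is a minimal sketch that counts how often each pair of tags appears on the same question. The function name `tag_cooccurrence` and the input shape (one list of tags per question) are my own assumptions, and the clustering step itself is not shown.

```python
from collections import Counter
from itertools import combinations


def tag_cooccurrence(questions):
    """Count how often each unordered pair of tags shares a question.

    `questions` is assumed to be an iterable of tag lists, one per question.
    Pairs are stored as alphabetically sorted tuples, so ("a", "b") and
    ("b", "a") end up in the same counter entry.
    """
    pair_counts = Counter()
    for tags in questions:
        # De-duplicate and sort so each pair has one canonical form.
        for first, second in combinations(sorted(set(tags)), 2):
            pair_counts[(first, second)] += 1
    return pair_counts


questions = [["asp.net", "c#"], ["c#", "asp.net", ".net"], ["python"]]
counts = tag_cooccurrence(questions)
print(counts[("asp.net", "c#")])
```

Tag pairs with a high count relative to how often each tag occurs on its own would be candidates for ending up in the same cluster.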

I’ve had a stab at trying this on the last (October 2009) StackOverflow public data set. I’ve ignored the SU and SF parts of the dump. The idea is to count how many of SO’s questions you could conceivably answer, given your proficiency in each of the tags of that question.

First I’ve scored every answer each user has given. 20 points go to the accepted answer, and another 80 points are distributed over all answers to a question in proportion to each answer’s votes. This is not the same as the reputation earned for that answer: answers to popular questions collect a lot more up-votes, but in this analysis a good answer to a popular question shouldn’t be worth much more than a good answer to an unpopular one.
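The scoring rule can be sketched roughly as follows. The `Answer` tuple and the function name are made up for illustration. I also assume here that negative vote totals count as zero and that the 80-point pool simply goes unassigned when no answer has any votes; the description above doesn’t settle either case.

```python
from collections import namedtuple

# Hypothetical record for one answer; field names are illustrative only.
Answer = namedtuple("Answer", ["user", "votes", "accepted"])

ACCEPTED_POINTS = 20  # bonus for the accepted answer
VOTE_POOL = 80        # shared among all answers in proportion to votes


def score_answers(answers):
    """Return {user: points} for the answers to a single question."""
    # Assumption: down-voted answers (negative totals) count as zero votes.
    total_votes = sum(max(a.votes, 0) for a in answers)
    scores = {}
    for a in answers:
        points = float(ACCEPTED_POINTS) if a.accepted else 0.0
        if total_votes > 0:
            points += VOTE_POOL * max(a.votes, 0) / total_votes
        scores[a.user] = scores.get(a.user, 0.0) + points
    return scores


answers = [Answer("alice", 6, True), Answer("bob", 2, False)]
print(score_answers(answers))  # {'alice': 80.0, 'bob': 20.0}
```

Whatever the vote counts, a question hands out at most 100 points in total, which is what keeps popular questions from dominating.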

The points for all your answers are distributed over the tags of the questions they answer. This gives each user a number of points per tag. These points are converted into the proficiency that user has for the tag: proficiency = 1 - exp(-points / 500). So 1000 points (10 very good answers) gets you to 86%, and more points will asymptotically get you to 100%.

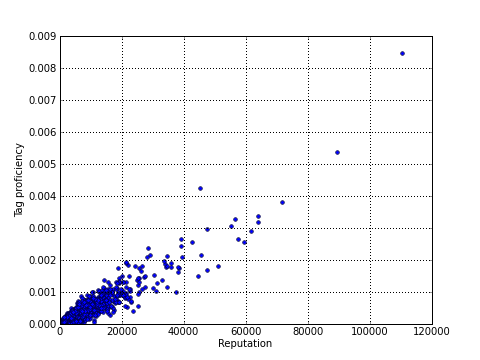
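Here is a small sketch of the points-to-proficiency step. The formula is the one given above. Splitting an answer’s points evenly over the question’s tags is my assumption about what “distributed” means, and the function names are illustrative.

```python
import math
from collections import defaultdict

SCALE = 500.0  # the constant from the proficiency formula


def tag_points(scored_answers):
    """Sum one user's points per tag.

    `scored_answers` is assumed to be an iterable of (points, tags) pairs.
    Assumption: an answer's points are split evenly over the question's tags.
    """
    totals = defaultdict(float)
    for points, tags in scored_answers:
        if not tags:
            continue
        share = points / len(tags)
        for tag in tags:
            totals[tag] += share
    return dict(totals)


def proficiency(points):
    """proficiency = 1 - exp(-points / 500)."""
    return 1.0 - math.exp(-points / SCALE)


print(round(proficiency(1000), 2))  # 0.86
```

With this curve, the first few hundred points in a tag matter most; beyond a couple of thousand the proficiency barely moves.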

At this point we can compute the average proficiency over all tags for each user, which is plotted in this graph (average tag proficiency versus reputation, for users with reputation > 1000):

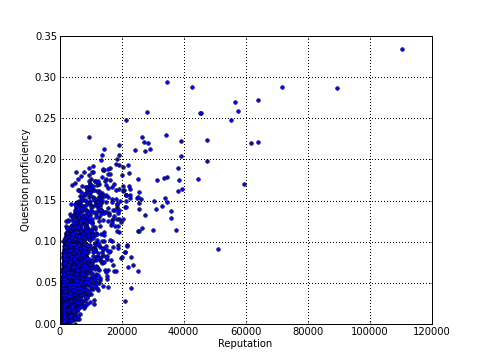

The next step is to compute the question proficiency. This is done, for each question and each user, by taking the geometric average of this user’s tag proficiencies over all tags that this question is tagged with (I’ve chosen the geometric average rather than the arithmetic one, since you’re supposed to be knowledgeable on all components of a question in order to answer it). These per-question proficiencies can again be averaged (arithmetically), yielding the user’s average question proficiency, which is a measure of how many of the site’s questions he/she could answer. This graph plots average question proficiency versus reputation:

Note that this last one is a fairly heavy query (~1 second per user), so it could be more feasible to base an actual implementation on tag proficiency only (see also this graph of tag vs. question proficiency), although this would overvalue knowledge of topics SO doesn’t care much about.
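For reference, the question proficiency computation can be sketched like this. It is an in-memory illustration rather than the query I actually ran. The function names and the `tag_prof` dictionary (tag name to proficiency between 0 and 1) are assumptions, as is treating a tag the user never answered in as proficiency 0.

```python
def question_proficiency(tag_prof, question_tags):
    """Geometric mean of a user's tag proficiencies over a question's tags.

    Assumption: a tag missing from `tag_prof` counts as proficiency 0,
    which makes the whole question proficiency 0.
    """
    if not question_tags:
        return 0.0
    product = 1.0
    for tag in question_tags:
        product *= tag_prof.get(tag, 0.0)
    return product ** (1.0 / len(question_tags))


def average_question_proficiency(tag_prof, questions):
    """Arithmetic mean of the per-question proficiencies over all questions."""
    if not questions:
        return 0.0
    total = sum(question_proficiency(tag_prof, tags) for tags in questions)
    return total / len(questions)


tag_prof = {"python": 0.81, "django": 0.25}
questions = [["python", "django"], ["python", "haskell"]]
print(average_question_proficiency(tag_prof, questions))
```

The geometric mean is what makes a single unknown tag drag a question down to zero, whereas an arithmetic mean would still give partial credit. The loop over every question for every user is also where the cost comes from.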

Analysing the question proficiency vs. reputation graph, we see that the two are clearly related (if you answer enough questions, you’re bound to have covered most of the tags). Still, some users reach a possible threshold value of 20% question proficiency at a reputation of only 10,000, while others don’t get there even at a reputation of 60,000.